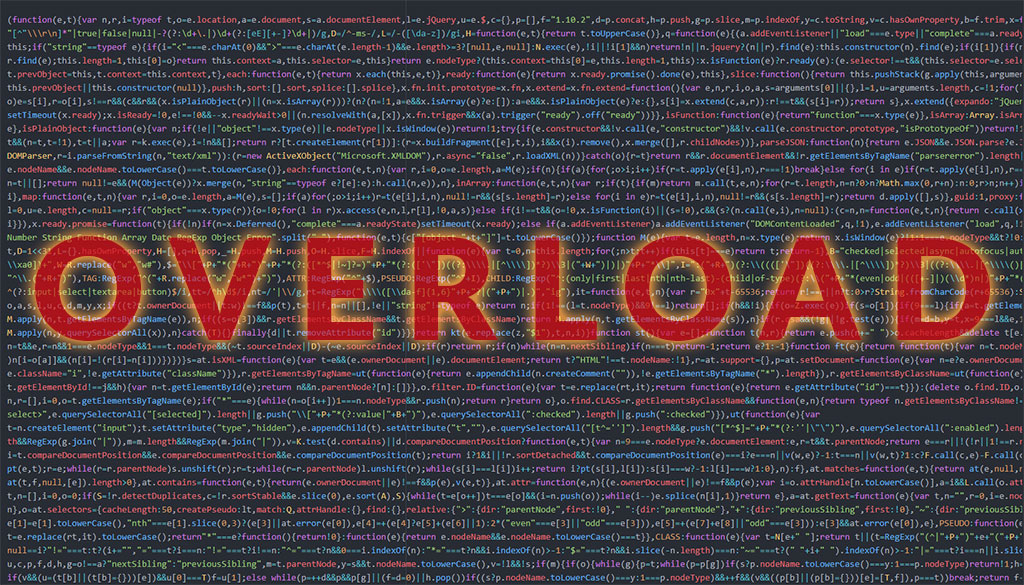

When Too Much is… Well, Too Much

According to Google’s Martin Splitt, a webmaster trends analyst, Google recommends reducing reliance on JavaScript in order to provide the best experience for users. What does this mean for you, the average website owner?

Go with a web design and development firm that uses JavaScript “responsibly” rather than excessively to build your site. “Responsible” use of JavaScript can also help ensure that a site’s content does not lag behind in Google’s search index, which in turn can help your rankings. That’s what we are all shooting for… higher placement in searches.

Overuse of JavaScript can seriously drag down the performance of a website.

When a visitor’s browser has to request and run a large number of scripts, it can affect the performance of your website, causing it to drag or delay loading key elements. When crawling a JS-heavy web page, Googlebot will first process the non-JS elements like HTML and CSS. The page then gets put into a queue, and Googlebot will render and index the JavaScript-dependent content when more resources are available.

Relying more on HTML and CSS can help

When it comes to crawling, indexing, and overall user experience, it’s best to rely primarily on HTML and CSS. Because they degrade more gracefully than JavaScript, they are less likely to fail or ding your site when crawled.
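For the developers in the room, here is a minimal sketch of what "relying primarily on HTML" can look like in practice. It assumes a site that builds its pages on the server; the `Article` shape, the `renderPage` function, and the `/enhance.js` path are made up for illustration and do not come from any particular framework. The point is simply that the headline and body text are already in the HTML, so they do not depend on a script running.

```typescript
// Hypothetical shape for a page's key content (illustrative only).
interface Article {
  title: string;
  body: string;
}

// Escape text so it is safe to place inside HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build the full page as an HTML string. The headline and body are part of
// the markup itself, so they are readable before (or without) any script.
function renderPage(article: Article): string {
  return [
    "<!DOCTYPE html>",
    "<html>",
    "<head><title>" + escapeHtml(article.title) + "</title></head>",
    "<body>",
    "<h1>" + escapeHtml(article.title) + "</h1>",
    "<p>" + escapeHtml(article.body) + "</p>",
    // Optional extras only; "defer" keeps the script from blocking the page.
    // "/enhance.js" is a placeholder path, not a real file.
    '<script src="/enhance.js" defer></script>',
    "</body>",
    "</html>",
  ].join("\n");
}
```

Calling `renderPage({ title: "Our Services", body: "We build websites." })` returns a complete page whose key content sits in plain HTML, with JavaScript left as an optional enhancement rather than a requirement.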


Learn how G5 Design can help with Logo Design and establishing your brand, responsive website design, and search engine optimization to help you get ahead in today’s competitive market.

Be sure to follow us on Facebook, Twitter, and LinkedIn to learn more about Responsive Website Design, Search Engine Optimization, and Logo and Brand Design.